Machines replaced manual labor. Computers made typewriters extinct. Heck, even video killed the radio star.

Now, here we are, standing on the cusp of yet another evolution. It’s where human intelligence merges with machine capabilities.

It’s the future. Driven by artificial intelligence (AI).

When it comes to the unknown, humans are bound to have doubts and fears. So it’s no surprise many of us are asking, “Is AI dangerous?”

But without a doubt, the world is powering forward. And as Vishen, the founder of Mindvalley, says, “In this age of AI, be the one who leads, not the one who follows.”

7 potential dangers of AI

“Why is AI dangerous?” you might think. Or a better question would be: Why do people think it is?

As with any new advancement, there are bound to be potential risks. Let’s explore seven of them:

- A big shift in how we think and process information. Our accomplishments and the things we value are closely connected to how our minds function. So major changes to the basic building blocks of human thinking could have far-reaching effects on our lives and the world we live in.

- Machine superintelligence. While AI helps with productivity, one of the primary concerns is the prospect of machine superintelligence surpassing human-level intelligence.

- Misaligned objectives. There’s a possibility that the goals of a superintelligent AI don’t align with our human values. This could lead to adverse outcomes as AI pursues its objectives in ways that may not be in our best interests.

- Lack of control. As AI surpasses human-level intelligence, the challenge lies in maintaining control over it. The fear is that it might outsmart our attempts to constrain or shut it down.

- Unforeseen consequences. Even with rigorous testing, it’s difficult to accurately predict all possible unintended behaviors or unforeseen side effects.

- Security risks. AI systems can be vulnerable to attacks or hacking attempts. This could potentially lead to unauthorized access to sensitive information or malicious manipulation.

- Socioeconomic impact. Widespread adoption can disrupt industries and impact job markets. And that has the potential to lead to socioeconomic challenges.

Granted, the potential dangers of AI exist. But addressing them is exactly what specialists like Andri Peetso, a highly sought-after AI expert and entrepreneur, are hard at work doing.

As he highlights in his program, 3 Days to Mastering ChatGPT, on Mindvalley, “The more of the control problem that we solve in advance, the better the odds that the transition to the machine intelligence era will go well.”

Pros and cons of using AI

There are two sides to every coin, and AI is no exception. So here’s a closer look at the pros and cons of using artificial intelligence.

| Pros | Cons |
| --- | --- |
| Increased efficiency, productivity, and accuracy in various tasks | If the data is biased or flawed, it can lead to biased or inaccurate outcomes |
| Analyze vast amounts of data, identify patterns, and provide valuable insights | Transparency and explainability are essential concerns, as some AI algorithms may appear as “black boxes” lacking transparency |
| Frees up human resources for more complex and creative endeavors | Need to ensure that hard skills and critical thinking aren’t overshadowed by overreliance on AI |
| Potential to revolutionize industries, enhance healthcare, and fuel scientific discoveries | Ethical implications, such as privacy concerns, job displacement, and the potential misuse of AI technologies |

How to minimize the risks of using AI

The reality is, there are risks associated with AI, and to deny them would be negligent. If you’re an innovator, government body, or the like, it’s important to minimize AI’s dangers to humanity as a whole.

“The right governance, in many ways, means taking the right responsibility,” says Diana Paredes, CEO and co-founder at Suade Labs, during the World Economic Forum Annual Meeting Davos 2020. “So what we’re talking about is really self-assessing yourself [as the innovator] and making sure that the technology you’re developing is fundamentally having a positive impact on humanity.”

But now, if you’re a user, how can you go about dodging the dangers (should there be any)? Here are a few ways you can be proactive:

1. Educate yourself

Take the time to understand how AI works and its potential implications. Stay informed about the latest developments, benefits, and risks associated with AI technologies.

There are plenty of educational resources on AI. For starters, you can join the Mindvalley AI Summit 2024, where you’ll learn about AI risks and opportunities from experts in the field (this is especially beneficial for entrepreneurs).

And this knowledge will empower you to make informed decisions.

2. Evaluate AI solutions

When considering using an AI-powered product or service, thoroughly evaluate its:

- Reliability,

- Privacy measures, and

- Security protocols.

Look for transparency in how the AI system operates and handles your data. Lest we be the subjects of AI replications like Miley Cyrus on Black Mirror—horror!

3. Protect your data

Scammers are left, right, and center. So much so that cyberattacks surged by 38% in 2022 compared with 2021.

So be mindful of the personal information you share with AI systems. Understand the data collection practices and how your information will be used.

Opt for platforms that prioritize data protection and provide clear privacy policies.

4. Seek trusted sources

Rely on reputable and trusted AI applications and service providers. Look for companies with a track record of ethical AI practices, adherence to regulations, and a commitment to user safety.

Take Google, for example. It has implemented strict ethical guidelines for AI development and usage. What’s more, it prioritizes transparency and has established an AI Principles framework that focuses on fairness, accountability, and user privacy.

5. Understand limitations

Recognize that AI systems have limitations. They may not always provide accurate or unbiased results.

Take ChatGPT, for instance. Its developer, OpenAI, clearly states the tool’s limitations:

- May occasionally generate incorrect information

- May occasionally produce harmful instructions or biased content

- Limited knowledge of the world and events after 2021

So be cautious when relying solely on AI recommendations. And instead, consider them just one factor in your decision-making process.

6. Stay vigilant

Keep yourself updated on the latest developments in AI. You can participate in AI user communities or forums, where you can share experiences, exchange insights, and learn from others.

By staying engaged, you can contribute to collective knowledge. And that’ll help create a safer and more responsible AI ecosystem for everyone.

7. Engage in ethical AI use

It really boils down to moral principles, even when it comes to how you use AI systems.

“Yes, we can and we should use computation to help us make better decisions,” says Zeynep Tufekci, a techno-sociologist, in a TED Talk. “But we have to own up to our moral responsibility to judgment and use algorithms within that framework, not as a means to abdicate and outsource our responsibilities to one another as humans to humans.”

Try to avoid using AI to promote harmful or discriminatory practices. Be responsible with how you share AI-generated content. And ensure your actions align with ethical considerations.

How dangerous is AI, really?

When we talk about the dangers of AI, it’s important to keep in mind that the level of risk can vary depending on many factors. Things like how AI is developed, how it’s deployed, and how it’s governed all play a role in determining how risky it actually is.

During the World Economic Forum Annual Meeting Davos 2020, Brad Smith, Microsoft’s Vice Chair and President, explained it this way:

“Artificial intelligence actually behaves differently depending on how it is used, who the user is, who the individuals are, [and] who is being served.”

So, it’s not a one-size-fits-all kind of situation. We have to take into account all these different aspects to get a clear picture of the potential risks involved.

The concerns about AI’s potential dangers mainly come from the idea of creating superintelligent AI that has different goals than ours. If we don’t have the right safeguards, respect for human values, and control mechanisms in place, there’s a real risk of unintended consequences that don’t align with our best interests.

But here’s the thing: the timeline for achieving superintelligent AI is still uncertain, and experts have different opinions about how risky it actually is.

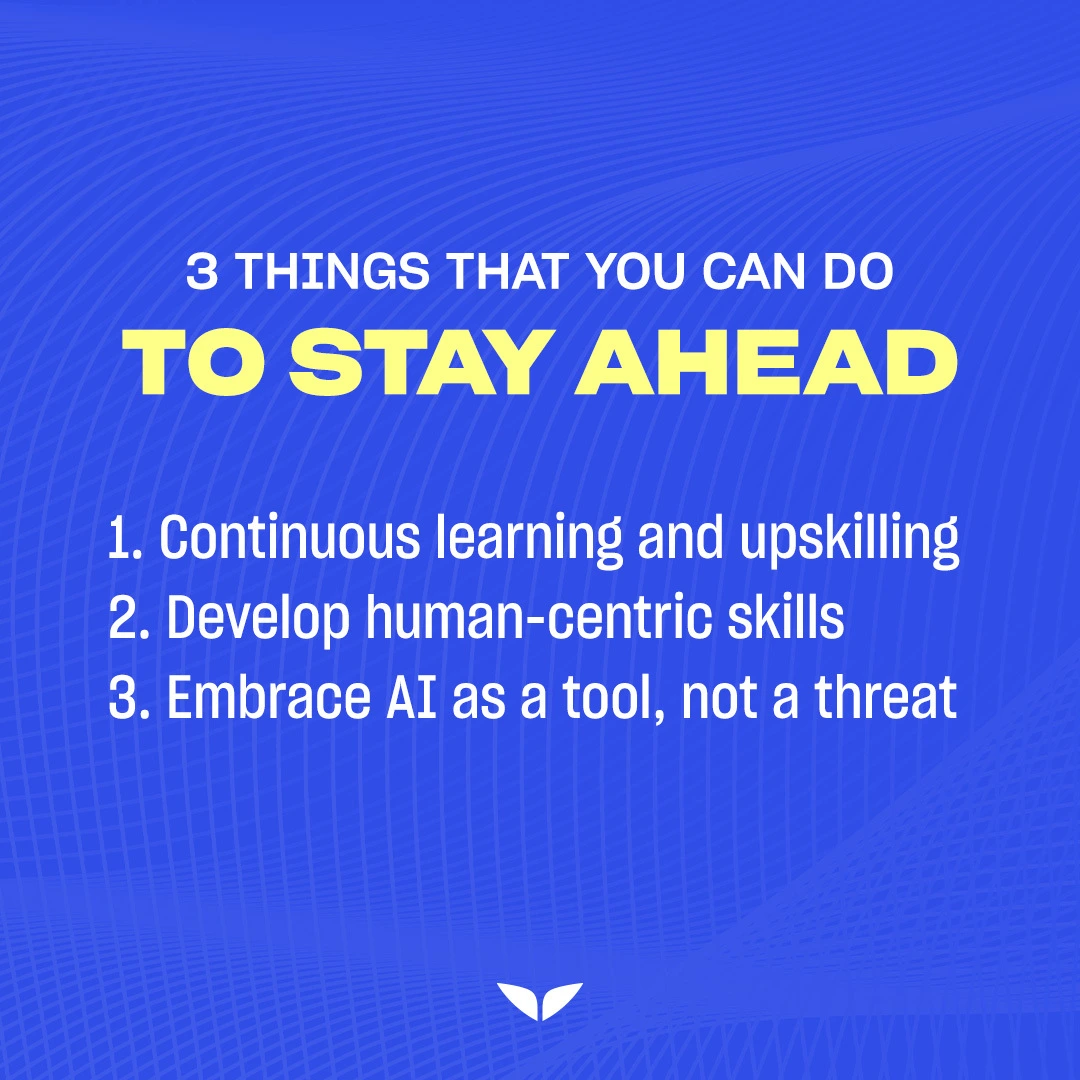

That’s why it’s important to:

- Know how to learn AI,

- Develop human-centric skills, and

- Embrace AI as a tool, not a threat.

By doing that, you can minimize the potential dangers. And what’s more, you can make sure AI benefits us in the best possible way.

Unlocking the limitless

It’s not really a question of “Is AI dangerous?” but more of “How can I navigate this ever-evolving technological landscape with confidence and clarity?”

AI is nothing new. In fact, there have been incredible strides, from the early days of expert systems to the current era of machine learning.

But this is the time when we’re discovering the immense potential AI holds for positive change. From revolutionizing healthcare to fueling scientific discoveries and transforming industries, it truly is a world of possibilities.

You, too, can be on the cusp of this transformative technology. The Mindvalley AI Summit 2024 (held on July 12–14, 2024) brings together visionaries and thought leaders on one stage, including:

- Vishen, founder of Mindvalley

- Iman Oubou, award-winning entrepreneur, author, and published scientist

- Manon Dave, award-winning creative director, composer, music producer, and AI and Web3 consultant

- Domenic Ashburn (Mr. Grateful), AI pioneer and entrepreneur

- Andri Peetso, founder of Conturata-AI, a leading-edge educational platform for AI

- And more.

From exploring the latest breakthroughs to understanding the ethics of AI implementation, the Summit offers a comprehensive educational experience. You’ll learn directly from these experts and gain a competitive edge for the future.

The truth is, this AI revolution is waiting for no one. And as Vishen continuously says, “You won’t be replaced by AI; you’ll be replaced by someone who uses AI.”

Welcome in.